Introduction

xlOptimizer implements an enhanced version of Particle Swarm Optimization (PSO), a a well-known swarm intelligence algorithm suitable for global optimization. The method, introduced by Kennedy and Eberhart [1], mimics the social behavior of flocks of birds and swarms of insects.

How does it work?

PSO has many similarities with other algorithms such as Genetic Algorithms (GA). At first, the population (or "swarm") is initialized with random solutions. However, unlike GA, PSO has no selection or evolution operators such as crossover and mutation; in PSO the members of the swarm (or "particles") "fly" through the problem space following simple mechanics. Thus, PSO searches for optima by updating each particle according to notions such as position, velocity, inertia etc. This movement of particles is not entirely random; each particle is attracted towards both its own personal best position and the swarm's best position.

Mathematical formulation

We assume that the population consists of p individuals. Within the Design Space (DS) each individual is characterized by its position and velocity, determined at time instant k by the corresponding vectors xk and vk. Initially, the particles are distributed randomly in the box-constrained search space, as follows:

$${{\bf{x}}_{LB}} \le {\bf{x}}_0^d \le {{\bf{x}}_{UB}}{\rm{ }}\forall d \in \left\{ {1,2,...,P} \right\}$$

where, xLB and xUB = vectors defining the lower and upper values of the variables, respectively. The initial velocities of the particles are also selected randomly:

$$ - {\bf{v}}_0^{\max } \le {\bf{v}}_0^d \le {\bf{v}}_0^{\max }{\rm{ }}\forall d \in \left\{ {1,2,...,P} \right\}$$

where,

$${\bf{v}}_0^{\max } = \gamma \left( {{{\bf{x}}_{UB}} - {{\bf{x}}_{LB}}} \right)$$

The position vector of individual d at the next time instant k+1 is given as:

$${\bf{x}}_{k + 1}^d = {\bf{x}}_k^d + {\bf{v}}_{k + 1}^d$$

where the time step Δt between the distinct time instants is assumed to be equal to unity. The velocity vector vdk+1 is given as:

$${\bf{v}}_{k + 1}^d = {w_k}\;{\bf{v}}_k^d + {c_1}\;{{\bf{r}}_1} \circ ({\bf{p}}_k^d - {\bf{x}}_k^d) + {c_2}\;{{\bf{r}}_2} \circ ({\bf{p}}_k^g - {\bf{x}}_k^d)$$

where, wk = inertia factor at time instant k; c1, c2 = cognitive and social parameters of the algorithm, respectively; pdk = best ever position vector of individual d at time instant k; pgk = best ever position vector among all individuals at time instant k; r1, r2 = vectors of random variables with uniform distribution in the interval [0,1] and the (o) operator indicates element-by-element multiplication. The simple PSO algorithm imposes no limitations with regard to the maximum velocity of the particles.

The enhanced PSO variant is based on [3], as follows:

- The initial maximum velocity of the individuals is evaluated so that in one time step an individual may travel up to a certain fraction of the search space:

$${\bf{v}}_0^{\max } = \gamma \left( {{{\bf{x}}_{UB}} - {{\bf{x}}_{LB}}} \right)$$

where, γ = scalar parameter.

- If the best solution found in the whole swarm is not improved over a period of h consecutive steps, then it is assumed that the velocities are large and the algorithm cannot locate better solutions due to overshooting. For this reason, both the inertia factor and the maximum velocity are decreased as follows:

\[{\text{If }}f({\mathbf{p}}_k^g) = f({\mathbf{p}}_{k - h}^g){\text{ then }}\left\{ {\begin{array}{*{20}{c}}

{{w_{k + 1}} = a{\text{ }}{w_k}} \\

{{\mathbf{v}}_{k + 1}^{\max } = \beta {\text{ }}{\mathbf{v}}_k^{\max }}

\end{array}{\text{ }}} \right.{\text{else }}\left\{ {\begin{array}{*{20}{c}}

{{w_{k + 1}} = {w_k}} \\

{{\mathbf{v}}_{k + 1}^{\max } = {\mathbf{v}}_k^{\max }}

\end{array}{\text{ }}} \right.\]

where, α, β < 1.

- The craziness operator assigns a random velocity vector to an individual resulting in its moving away from the swarm and thus exploring other regions of the search space. The operator is activated with a probability Pcr as follows:

\[{\text{If }}r < {P_{cr}}{\text{ then randomly assign }}{\mathbf{v}}_{k + 1}^m{\text{ with - }}{\mathbf{v}}_{k + 1}^{\max } \leqslant {\mathbf{v}}_{k + 1}^d \leqslant {\mathbf{v}}_{k + 1}^{\max }\]

where r = random variable with uniform distribution in the interval [0,1].

- The algorithm employs both an elite particle and an elite velocity. The individual with the worst performance is moved to the best ever position of the swarm. In addition, if the velocity vector vdk resulted in an improvement of the global best position, then:

\[{\mathbf{x}}_{k + 1}^d = {\mathbf{p}}_k^g + {c_3}{\text{ }}{{\mathbf{r}}_3} \circ {\mathbf{v}}_k^d\]

where, c3 = parameter of the algorithm and r3 is a vector of random variables with uniform distribution in the interval [0,1].

Usual parameter values for small to medium-sized problems are the following: (a) population size p=20-30, (b) cognitive parameter c1=0.5, (c) social parameter c2=1.6, (d) constant inertia factor w=0.8, (d) maximum velocity coefficient γ=0.4, (e) initial inertia factor w0=1.40, (f) maximum steps without improvement h=3, (g) fraction for the decrease of the inertia factor α=0.99, (h) fraction for the decrease of maximum velocity β=0,95, (i) craziness factor Pcr=0.22, (j) elite velocity factor c3=1.30. [2], [3].

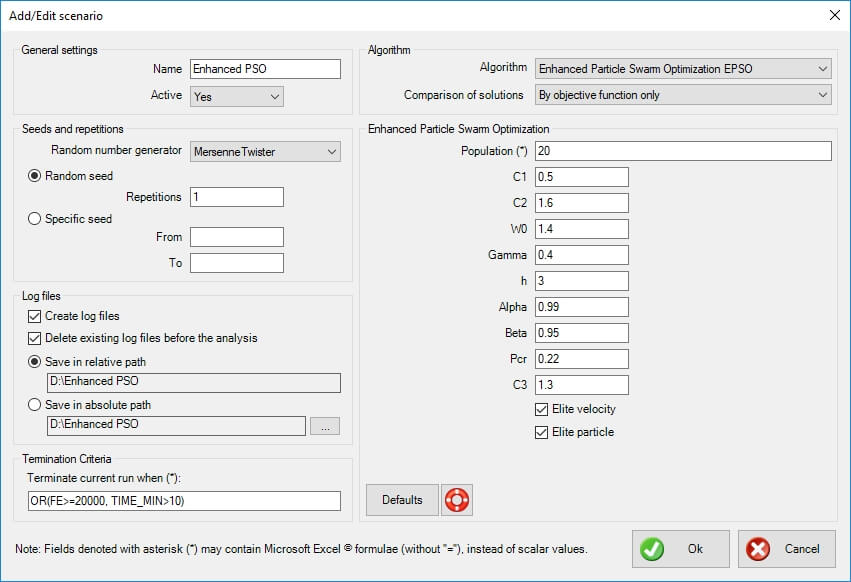

Options in xlOptimizer add-in

The following options are available in xlOptimizer add-in. Note that whenever an asterisk (*) is indicated in a text field, this means that the field accepts a formula rather than a certain value. The formula is identical to Microsoft Excel's formulas, without the preceding equal sign '='. Also, the function arguments are always separated by comma ',' while the dot '.' is used as a decimal point.

General settings

- Name: the name of the scenario to be run. It should be descriptive but also concise, as a folder with the same name will be (optionally) created, which will hold the log files for further statistical analysis.

- Active: select whether the scenario is active or not. If it is active, it will be run in sequence with the other active scenaria. In the opposite case, it will be ignored. This is very helpful when you experiment with settings.

Seeds and repetitions

Metaheuristic algorithms are based on random number generators. These are algorithms that produce a different sequence of random numbers for each seed they begin with. A seed is just an integer, and a different seed will produce a different evolution history with a different outcome. Robust metaheuristic algorithms should, on average, perform the same no matter what the seed is.

- Random number generator: select the random number generator to be used. The Mersenne Twister is default. The following options are available:

- NumericalRecipes: Numerical Recipes' [4] long period random number generator of L’Ecuyer with Bays-Durham shuffle and added safeguards

- SystemRandomSource: Wraps the .NET System.Random to provide thread-safety

- CryptoRandomSource: Wraps the .NET RNGCryptoServiceProvider

- MersenneTwister: Mersenne Twister 19937 generator

- Xorshift: Multiply-with-carry XOR-shift generator

- Mcg31m1: Multiplicative congruential generator using a modulus of 2^31-1 and a multiplier of 1132489760

- Mcg59: Multiplicative congruential generator using a modulus of 2^59 and a multiplier of 13^13

- WH1982: Wichmann-Hill's 1982 combined multiplicative congruential generator

- WH2006: Wichmann-Hill's 2006 combined multiplicative congruential generator

- Mrg32k3a: 32-bit combined multiple recursive generator with 2 components of order 3

- Palf: Parallel Additive Lagged Fibonacci generator

- Random repetitions: use this option if you wish the program to select random seeds for every run. Also, select the number of repetitions. If you wish to perform a statistical analysis of the results, a sample of 30 runs should be sufficient.

- Specific seed: use this option if you wish to use a selected range of integers as seeds. Note that seeds are usually large negative integers.

Log files

- Create log files: select whether you wish to create a log file for each run. This is imperative if you wish to statistically analyze the results. If you are only interested in the best result out of a certain number of runs, disabling this will increase performance (somewhat).

- Delete existing log files before the analysis: select this option if you want the program to delete all existing (old) log files (with the extension ".output") in the specific folder, before the analysis is performed.

- Save in relative path: select this option to create and use a folder relative to the spreadsheet's path. The name of the folder is controlled in the General Settings > Name.

- Save in absolute path: select this option to create and use a custom folder. Press the corresponding button with the ellipses "..." to select the folder.

Termination criteria

Each scenario needs a specific set of termination criteria. You can use an excel-like expression with the following variables (case insensitive):

- 'BEST_1', 'BEST_2', etc represent the best value of the objective function 1, 2, etc in the population.

- 'AVERAGE_1', 'AVERAGE_2', etc represent the average value of the objective function 1, 2, etc in the population.

- 'WORST_1', 'WORST_2', etc represent the worst value of the objective function 1, 2, etc in the population.

- 'MIN_1', 'MIN_2', etc represent the minimum value of the objective function 1, 2, etc in the population.

- 'MAX_1', 'MAX_2', etc represent the maximum value of the objective function 1, 2, etc in the population.

- 'FE' represents the function evaluations so far.

- 'TIME_MIN' represents the elapsed time (in minutes) since the beginning of the run.

- 'BEST_REMAINS_FE' represents the number of the last function evaluations during which the best solution has not been improved.

The default formula is 'OR(FE>=20000, TIME_MIN>10)' which means that the run is terminated when the number of function evaluations is more than 20000 or the run has lasted more than 10 minutes.

Algorithm

- Algorithm: select the algorithm to be used in the specific scenario. In this case 'Enhanced Particle Swarm Optimization' is selected.

- Comparison of solutions: select whether feasibility (i.e. the fact that a specific solution does not violate any constraints) is used in the comparison between solutions. The following options are available:

- By objective function only: feasibility is ignored, and only the objective function (potentially including penalties from constraint violations) is used to compare solutions.

- By objective function and feasibility: the objective function (potentially including penalties from constraint violations) is used to compare solutions, but in the end, a feasible solution is always preferred to an infeasible one.

Enhanced Particle Swarm Optimization

The following options are specific to Standard Genetic Algorithm.

Population

Enter a formula for the calculation of the population size. You can use an excel-like expression with the following variables (case insensitive):

- 'VARS' represents the number of variables (i.e. the dimensionality of the problem).

The default setting is '20'.

C1

Select an appropriate value for the cognitive parameter c1. This controls the weight of the pull of each particle to the best ever position of the same particle. The default value for EPSO is 0.5.

C2

Select an appropriate value for the cognitive parameter c2. This controls the weight of the pull of each particle to the best ever position of the whole swarm. The default value for EPSO is 1.6.

W0

Select an appropriate value for the inertia factor w0. This controls the inertia of the particle. The default value for EPSO is 1.4.

Gamma

Select an appropriate value for the gamma factor γ. This controls the initial maximum velocities of the particles, as the fraction of the design space (per variable) that can be traveled in one time step. The default value is 0.4.

h

Select an appropriate value for parameter h (consecutive steps without improvement of the global optimum). If more than h steps are observed without improvement, then it is assumed that the velocities are large and w=alpha*w, vmax=beta*vmax. Use zero to disable. Usual value is 3.

Alpha

Select an appropriate value for parameter alpha. If the h-parameter is activated, the inertia parameter is decreased according to w=alpha*w. Use 1 to disable. Usual value is 0.99.

Beta

Select an appropriate value for parameter beta. If the h-parameter is activated, the maximum velocities are decreased according to vmax=beta*vmax. Use 1 to disable. Usual value is 0.95.

Pcr

Select an appropriate value for parameter Pcr. This is the 'craziness' probability. When activated, the velocity of a particle is initialized randomly. It is a safeguard against premature convergence. Use 0 to disable. Usual value is 0.22.

Elite velocity

If the velocity of a particle improved the best ever position of the swarm, then it is used again, multiplied by a random number in [0.1] and also multiplied by parameter C3.

C3

Select an appropriate value for the elite velocity parameter c3. Usual value is 1.3.

Elite particle

When selected, the worst particle in each step is transferred to the best ever position of the swarm.

References

[1] Kennedy J, Eberhart RC. Particle swarm optimization. In: Proceedings, of IEEE International Conference on Neural Networks IV, Perth Australia, IEEE press Piscataway, NJ,1995; p. 1942–1948.

[2] Charalampakis, A. E., Dimou, C. K., “Identification of Bouc–Wen Hysteretic Systems using Particle Swarm Optimization”, Computers and Structures, 88 (2010): 1197–1205, doi:10.1016/j.compstruc.2010.06.009.

[3] Fourie PC, Groenwold AA. The particle swarm optimization algorithm in size and shape optimization. Struct Multidisc Optim 2002;23(4):259–267.

[4] Press, W.H., Teukolsky, S.A., Vetterling, W.T. and Flannery, B.P. (2002) Numerical recipes in C++: the art of scientific computing. Cambridge University Press.